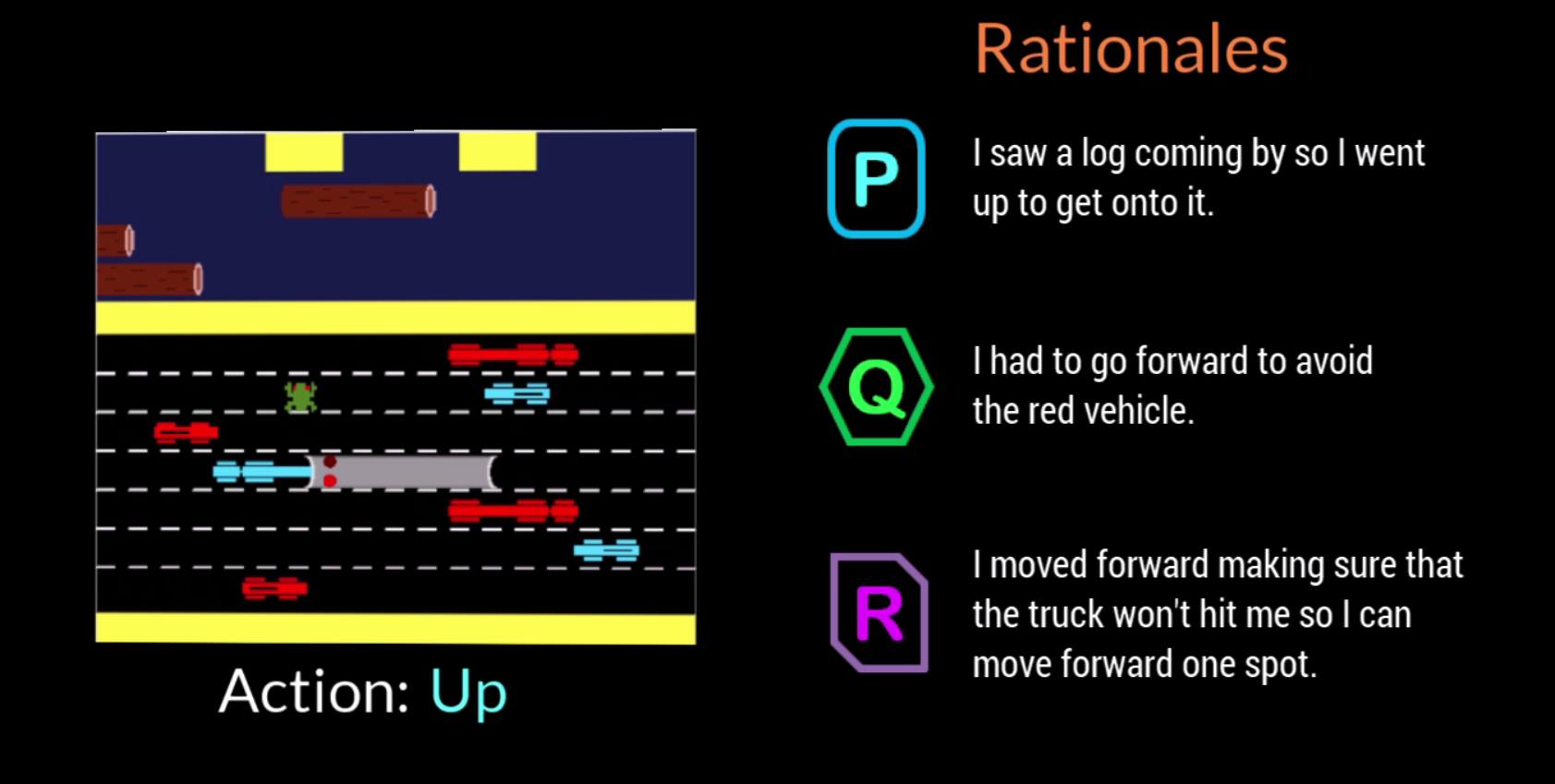

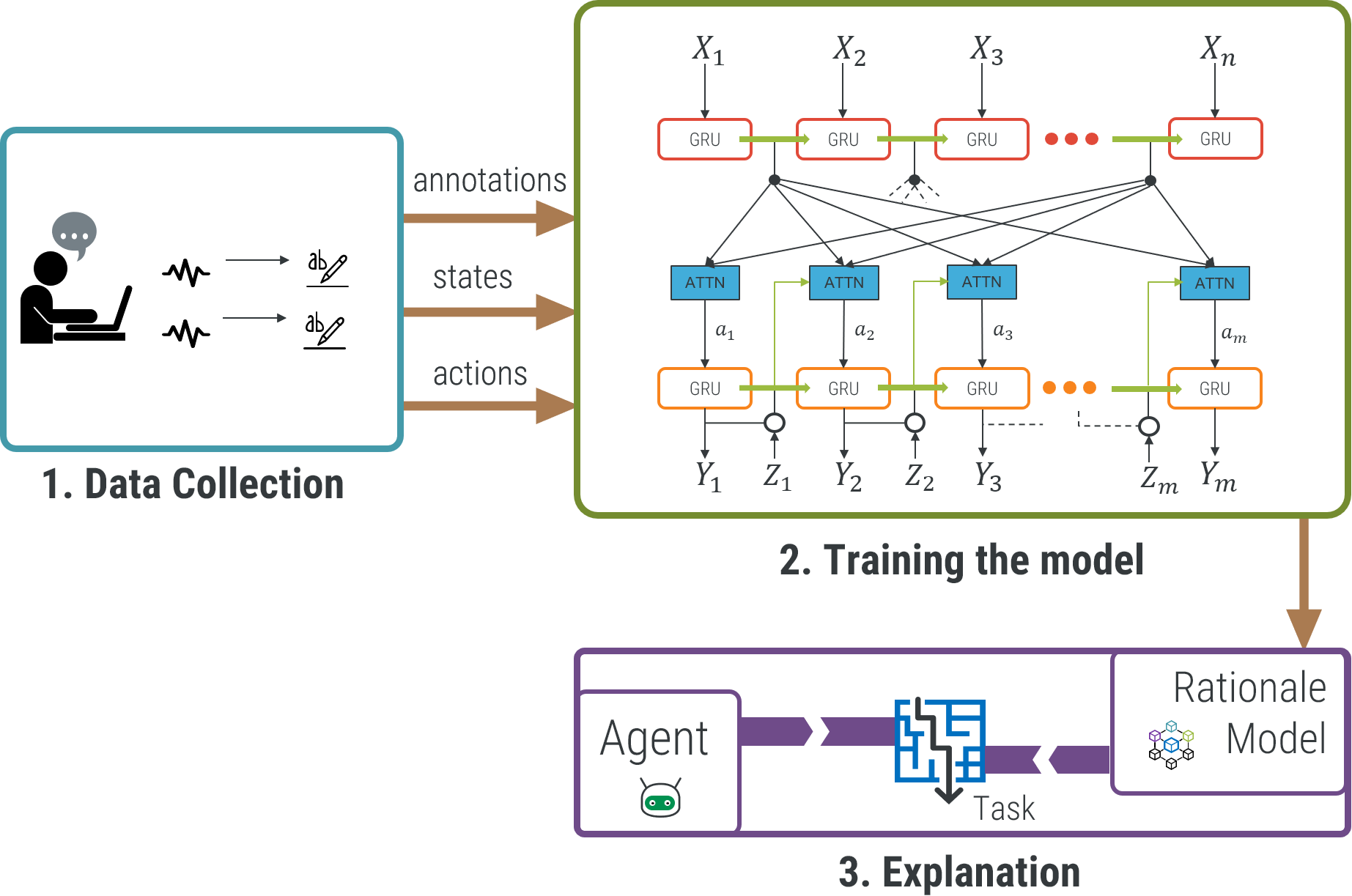

As AI and machine learning systems become more sophisticated and are granted more autonomy, they become more likely to make decisions that surprise us. In these situations, people often become skeptical or distrustful of the AI or autonomous system. In this project, we explored how to make AI and machine learning systems more approachable to humans by giving them the ability to provide humanlike “rationales” for their behavior.

An AI rationale is an explanation for a behavior that mimics the justification a person would give had they performed a similar behavior. In this project, we use sequence-to-sequence networks to learn associations between an autonomous agent’s world information and human explanations gathered from people who were placed in similar situations. Results show that these deep learning techniques can learn to generate rationales that closely resemble those a human would produce.
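Because the approach treats rationale generation much like machine translation, each training example has to become a pair of token sequences: the agent's world information as the source and a person's explanation as the target. The sketch below shows one way such a parallel corpus might be built in plain Python. The grid symbols, the `ACTION=` token, the function names (`encode_state`, `build_vocab`, `to_ids`, `make_parallel_corpus`), and the sample rationale are all hypothetical illustrations, not the project's actual state encoding, code, or data.

```python
from typing import Dict, List, Tuple

# Special tokens commonly reserved by sequence-to-sequence models.
PAD, SOS, EOS, UNK = "<pad>", "<sos>", "<eos>", "<unk>"


def encode_state(grid: List[str], action: str) -> List[str]:
    """Flatten a hypothetical grid world plus the agent's action into source tokens.

    The cell symbols and the "ACTION=" token are illustrative assumptions,
    not the encoding used in the original project.
    """
    tokens: List[str] = []
    for row in grid:
        tokens.extend(row)  # one token per cell, row-major order
        tokens.append("|")  # marks the end of a row
    tokens.append("ACTION=" + action)
    return tokens


def build_vocab(sequences: List[List[str]]) -> Dict[str, int]:
    """Assign an integer id to every token, after the reserved special tokens."""
    vocab = {PAD: 0, SOS: 1, EOS: 2, UNK: 3}
    for seq in sequences:
        for tok in seq:
            if tok not in vocab:
                vocab[tok] = len(vocab)
    return vocab


def to_ids(tokens: List[str], vocab: Dict[str, int], add_markers: bool = False) -> List[int]:
    """Map tokens to ids; tokens missing from the vocabulary become <unk>."""
    ids = [vocab.get(tok, vocab[UNK]) for tok in tokens]
    if add_markers:
        # Target sequences are wrapped so the decoder learns where to start and stop.
        ids = [vocab[SOS]] + ids + [vocab[EOS]]
    return ids


def make_parallel_corpus(
    examples: List[Tuple[List[str], str, str]],
) -> Tuple[List[Tuple[List[int], List[int]]], Dict[str, int], Dict[str, int]]:
    """Turn (grid, action, human rationale) triples into (source ids, target ids) pairs."""
    sources = [encode_state(grid, action) for grid, action, _ in examples]
    targets = [rationale.lower().split() for _, _, rationale in examples]
    src_vocab = build_vocab(sources)
    tgt_vocab = build_vocab(targets)
    pairs = [
        (to_ids(src, src_vocab), to_ids(tgt, tgt_vocab, add_markers=True))
        for src, tgt in zip(sources, targets)
    ]
    return pairs, src_vocab, tgt_vocab


# Hypothetical example: "F" is the agent, "C" is a car, "." is empty space.
examples = [(["..C", ".F."], "up", "I moved up to avoid the car")]
pairs, src_vocab, tgt_vocab = make_parallel_corpus(examples)
print(pairs[0][0])  # [4, 4, 5, 6, 4, 7, 4, 6, 8]
print(pairs[0][1])  # [1, 4, 5, 6, 7, 8, 9, 10, 2]
```

The resulting integer sequences could then be fed to an encoder-decoder network; the `<sos>` and `<eos>` markers on the target side give the decoder explicit start and stop signals when generating a rationale token by token.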

Associated Publications

- Upol Ehsan, Pradyumna Tambwekar, Larry Chan, Brent Harrison, and Mark O. Riedl. Automated Rationale Generation: A Technique for Explainable AI and its Effects on Human Perceptions. In Proceedings of the ACM Conference on Intelligent User Interfaces (IUI), 2019.

- Brent Harrison, Upol Ehsan, and Mark O. Riedl. Rationalization: A Neural Machine Translation Approach to Generating Natural Language Explanations. In Proceedings of the AAAI/ACM Conference on AI, Ethics, and Society (AIES), New Orleans, LA, 2018.

- Upol Ehsan, Pradyumna Tambwekar, Larry Chan, Brent Harrison, and Mark O. Riedl. Learning to Generate Natural Language Rationales for Game Playing Agents. In Proceedings of the Workshop on Experimental AI in Games (EXAG), Edmonton, Alberta, Canada, 2018.

Press Coverage

- http://www.sciencemag.org/news/2017/07/how-ai-detectives-are-cracking-open-black-box-deep-learning

- https://www.eurekalert.org/pub_releases/2019-04/giot-aao041119.php

- https://eandt.theiet.org/content/articles/2019/04/ai-agent-talks-through-its-decisions-in-simple-english/

- https://www.engr.uky.edu/research-faculty/research/2019/explainable-artificial-intelligence-systems-brent-harrison